Inverse Reinforcement Learning with Simultaneous Estimation of Rewards and Dynamics

Michael Herman, Tobias Gindele, Jörg Wagner, Felix Schmitt, Wolfram Burgard

Proceedings of the 19th International Conference on Artificial Intelligence and Statistics (AISTATS), Cadiz, Spain, May 9–11, 2016

Abstract:

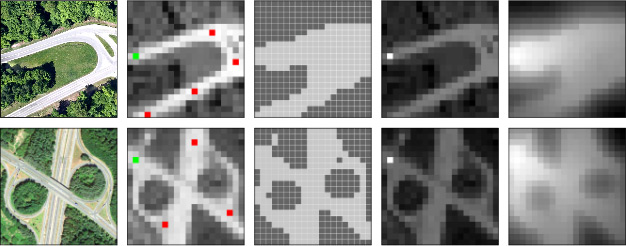

Inverse Reinforcement Learning (IRL) describes the problem of learning an unknown reward function of a Markov Decision Process (MDP) from observed behavior of an agent. Since the agent’s behavior originates in its policy, and MDP policies depend on both the stochastic system dynamics and the reward function, the solution of the inverse problem is significantly influenced by both. Current IRL approaches assume that, if the transition model is unknown, either additional samples from the system’s dynamics are accessible or the observed behavior provides enough samples of the system’s dynamics to solve the inverse problem accurately. These assumptions are often not satisfied. To overcome this, we present a gradient-based IRL approach that simultaneously estimates the system’s dynamics. By solving the combined optimization problem, our approach takes into account the bias of the demonstrations, which stems from the generating policy. The evaluation on a synthetic MDP and a transfer learning task shows improvements in sample efficiency as well as in the accuracy of the estimated reward functions and transition models.

@INPROCEEDINGS{Herman2016AISTATS,
  author={Michael Herman and Tobias Gindele and Jörg Wagner and Felix Schmitt and Wolfram Burgard},
  booktitle={Proceedings of the 19th International Conference on Artificial Intelligence and Statistics (AISTATS)},
  title={Inverse Reinforcement Learning with Simultaneous Estimation of Rewards and Dynamics},
  year={2016},
  pages={102--110},
  month={May},
}